I’ve been to a lot of meetups about AI in the last year. Across all of those there’s been a common refrain that gets repeated by the experts and the newly empowered noobs alike. “If you don’t know how to get what you need out of your AI tool, just ask it.” It’s one of the most powerful aspects of the AI revolution. You can’t ask a hammer how to build a cabinet. You can ask Claude how to build the web app you’ve imagined for the last 20 years.

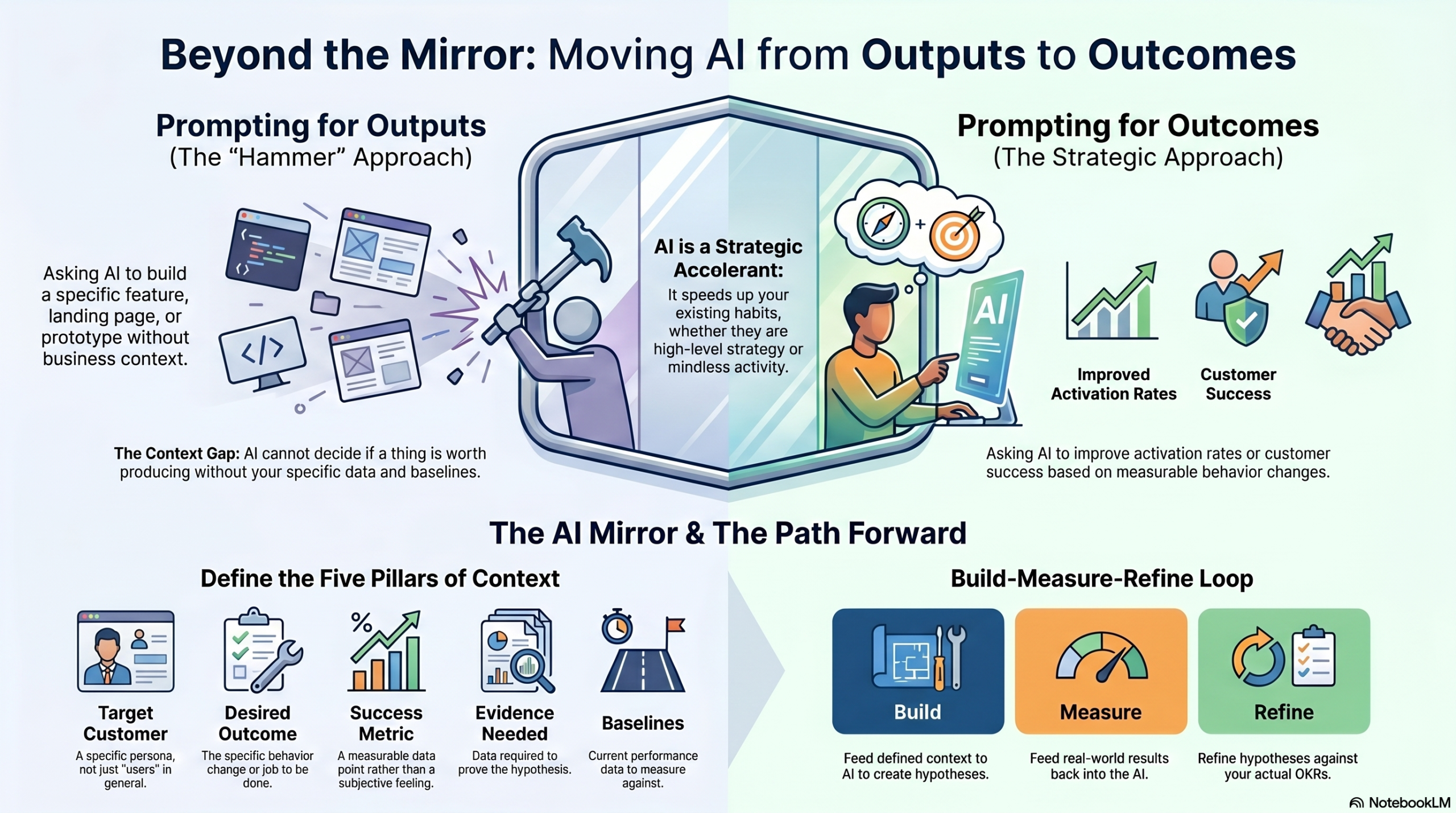

In all of these cases though, the prompt is always focused on creating a specific thing, an output. However, there’s a question worth sitting with — one we’ve started discussing internally lately. What would it look like to prompt AI for an outcome instead of an output?

Try it. Open Claude or ChatGPT and ask it to “build me a feature.” It will. Ask it to “design me a landing page.” It will. Ask it to “create a prototype of an onboarding flow.” It will, and the result will be impressive enough that you’ll forward it to three colleagues.

Now ask it to “increase activation rate by 15%.” It can’t. Ask it to “improve customer retention.” It can’t. Ask it to “make our users more successful at the job they hired our product to do.” It will produce something that sounds like an answer. It’s not likely to be a good one.

AI is great at outputs. Outcomes require something it can’t give you on its own.

The reason isn’t that AI is bad at outcomes. The reason is that an outcome prompt requires you to know what outcome you’re after, for which user, in which context, measured how, and against what baseline. The model can’t help you with that, at least not without context and data. The model can help you produce the thing. It cannot help you decide whether the thing is worth producing.

This was always true. Frameworks and templates and design sprints were also tools that produced outputs faster. None of them ever told you what outcome you were trying to drive. We used to be able to hide that gap because building was slow enough that the question came up naturally. By the time you had a feature ready to ship, somebody, somewhere, had to articulate what success looked like.

AI has eliminated that grace period. The feature ships in an afternoon. The outcome question gets asked rarely, if at all.

The prompt that exposes the gap

Most teams are prompting for outputs because outcomes are rarely clearly defined in the first place. What used to be invisible, the absence of clear outcome thinking, is now embarrassingly obvious. The team that used to ship one feature a quarter and call it strategy now ships forty features a quarter and calls it activity (velocity, anyone?). The volume has increased. The judgment has been left behind in favor of “productivity.”

AI is a mirror. It doesn’t make teams better at product work. It shows you, with uncomfortable clarity, what your team was actually doing before. If your team had clear outcomes, AI lets you test against them ten times faster. If your team had OKRs that were a list of outputs to ship by a date, AI generates those lists ten times faster. The shape of the work hasn’t changed (yet). Only the speed.

The teams getting the most out of AI right now are not the ones with the best prompt libraries or the most sophisticated tooling. They are the teams that already knew how to write a meaningful outcome before AI showed up. For them, AI is an accelerant on real strategy. For everyone else, it’s an accelerant on the wrong work.

Try this with your team this week

Run this experiment. Open whatever AI tool your team is using. Try to write a prompt that asks the tool to drive a behavior change without naming a specific feature to build. Ask the AI to prompt you for the context it needs to suggest how to achieve that outcome. Make sure it doesn’t build anything without including telemetry in the build plan.

Specifically, your AI will need to at least know:

- Who the customer is. Specifically, not “users.”

- What they’re trying to accomplish. In their words, not yours.

- What “success” looks like for them (and for you). Measurable, not a feeling.

- What evidence it (we?) would need to see to confirm or deny any feature hypotheses it comes up with.

- What baselines we currently have on that desired behavior.

Now comes the product work. You’ll have to answer these questions before the bot does any work. If you don’t know the answers, you’ll have to go get them or point the AI toward the systems that can provide the baselines it needs. This is the AI mirror at work: whatever the bot was missing from that prompt is what was already missing from the product conversations your team has been having.
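If it helps to make the checklist above concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `OutcomeBrief` class, its field names, and the prompt wording are hypothetical, not part of any AI tool’s API. It only shows the idea that the prompt should not get written until every question has an answer.

```python
from dataclasses import dataclass, fields


@dataclass
class OutcomeBrief:
    """Hypothetical container for the context an outcome prompt needs."""
    customer: str = ""             # who, specifically (not "users")
    goal_in_their_words: str = ""  # what they are trying to accomplish
    success_metric: str = ""       # measurable definition of success
    evidence_needed: str = ""      # what would confirm or refute a hypothesis
    baseline: str = ""             # current measurement of the desired behavior


def missing_fields(brief: OutcomeBrief) -> list[str]:
    """Return the names of the fields that are still blank."""
    return [f.name for f in fields(brief) if not getattr(brief, f.name).strip()]


def build_prompt(brief: OutcomeBrief) -> str:
    """Render an outcome-first prompt, or raise if any context is missing."""
    gaps = missing_fields(brief)
    if gaps:
        raise ValueError("Answer these before prompting: " + ", ".join(gaps))
    return (
        f"Customer: {brief.customer}\n"
        f"What they are trying to accomplish: {brief.goal_in_their_words}\n"
        f"Success looks like: {brief.success_metric}\n"
        f"Evidence needed: {brief.evidence_needed}\n"
        f"Current baseline: {brief.baseline}\n"
        "Do not propose a specific feature yet. Ask me for any context you "
        "still need, then suggest hypotheses for reaching this outcome. "
        "Every build plan must include telemetry."
    )
```

Calling `missing_fields` on a half-filled brief gives you the list of product questions your team still owes an answer to, which is the point of the exercise.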

Once the AI comes up with a series of hypotheses that might help you achieve your key result, ask it to justify each recommendation objectively, based on the data you provided. Compare the AI-produced hypotheses with your own ideas, then start building, ensuring that everything you push to production can be measured. As the results start coming back, feed them back into the AI and ask it to compare the results with its original set of recommendations.
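The comparison step can be as simple as checking each shipped hypothesis against your baseline and target. The sketch below is one way to do that, under stated assumptions: the function names are hypothetical, the rates are made-up illustration values, and a target such as “15%” is treated as a relative lift over the baseline (your team may define it as an absolute change instead).

```python
def relative_lift(baseline: float, observed: float) -> float:
    """Relative change of the observed rate versus the baseline rate."""
    if baseline <= 0:
        raise ValueError("baseline must be a positive rate")
    return (observed - baseline) / baseline


def review_hypotheses(
    results: dict[str, float], baseline: float, target_lift: float
) -> dict[str, dict]:
    """Compare each hypothesis's observed rate with the baseline and target.

    `results` maps a hypothesis name to the rate measured after shipping it.
    """
    report = {}
    for name, observed in results.items():
        lift = relative_lift(baseline, observed)
        report[name] = {
            "lift": round(lift, 3),
            "met_target": lift >= target_lift,
        }
    return report


# Illustrative numbers only: a 40% baseline activation rate and a
# target of a 15% relative lift.
report = review_hypotheses(
    {"shorter signup form": 0.48, "welcome email": 0.42},
    baseline=0.40,
    target_lift=0.15,
)
```

A report like this is what you would paste back into the AI alongside its original recommendations, so it can see which hypotheses held up and which did not.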

The desired end state here is a continuous collaboration between your product team and your AI tools, in which you push to production only the ideas you believe will help you hit your OKRs. The good news is that, unlike your stakeholders, your AI tools won’t forget about your specific goals. Instead, they’ll continue to help you refine your approach toward delivering something your customers actually find valuable.

P.S. – We’ve got a free webinar coming up on May 13, 2026, on exactly this topic. Sign up for free here.
