Steve Blank, godfather of customer discovery and lean startup, recently wrote about his astonishment at how much more his latest cohort of students in Stanford’s Lean LaunchPad accomplished in a week than earlier cohorts had. He goes on to diagnose the effect AI had on the cohort’s productivity and, ultimately, on their ability to think critically about their work and the appropriate next steps.

Eight student teams showed up on the first day of class with MVPs – minimum viable products – that would have taken months to build a year ago. They were using the latest tools, such as Claude Code, Replit, v0, Granola and Perplexity, to collapse the Customer Discovery process into a single weekend. Steve and his instructor team watched the timeline they’d spent a decade and a half teaching get compressed by a factor of ten. Then they noticed something stranger.

“The bottleneck for our student teams has moved from needing the resources to build high-quality MVPs to judgment: how to choose the right problem, how to read user signals correctly, and deciding what to build next.”

The classroom version of that sentence is hopeful. Stanford students are bright, well-supervised, and have an instructor team trained in Customer Development standing five feet away. They will eventually figure out that more MVPs is not the same as more learning. A semester is plenty of time to recover from an overreliance on velocity that outruns validation.

The enterprise version of this phenomenon is less hopeful.

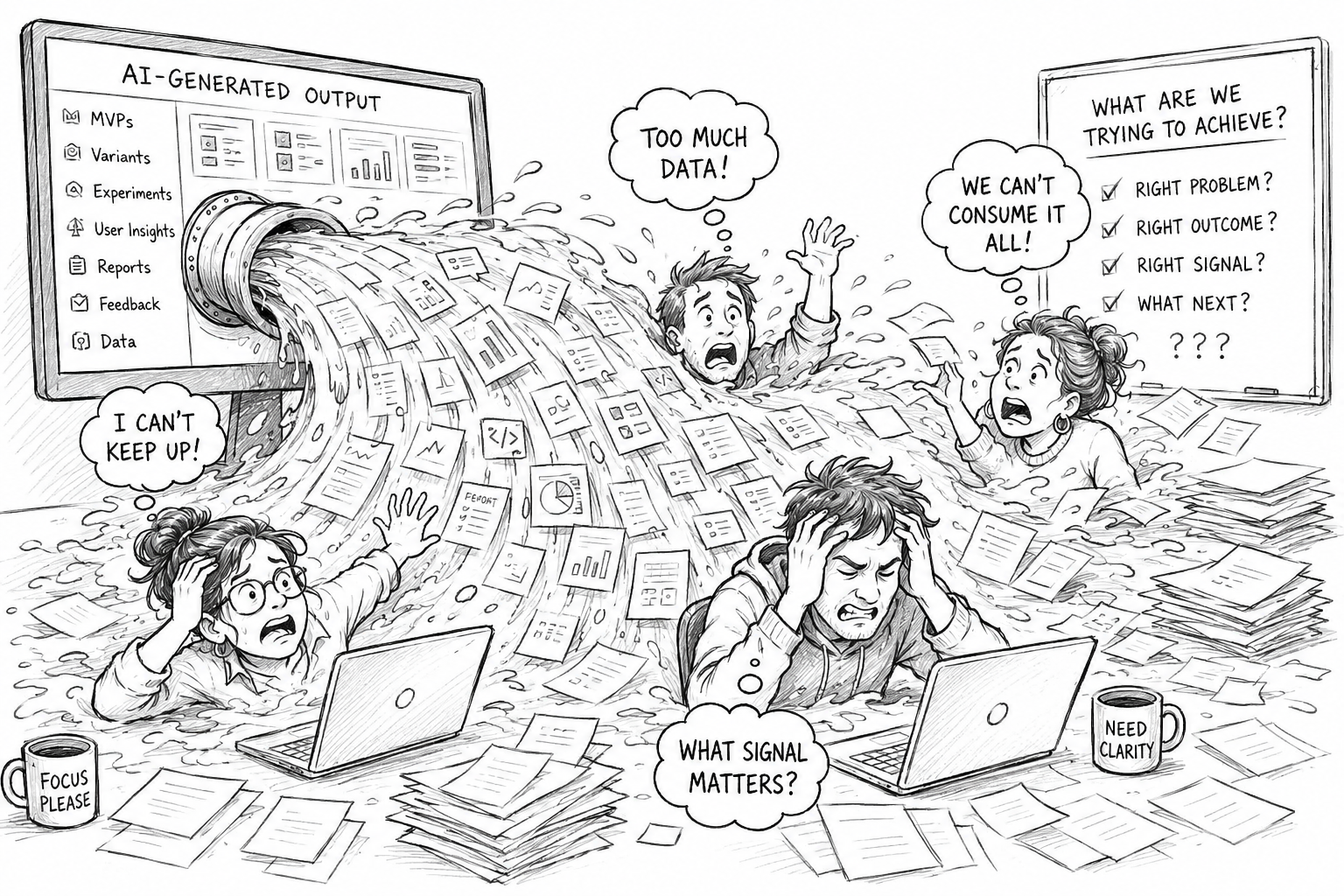

The accidental denial-of-service attack, at scale

This line from Blank is perhaps the most quotable phrase of the year: AI-built MVPs have become “an accidental denial of service attack on the search for a repeatable and scalable business model.” Three teams shipping ten MVPs each in a single week overwhelm their own ability to read what any one of those MVPs is actually telling them. The abundance of perceived productivity becomes its own noise, leaving the teams without the time or bandwidth to process each new data point. The net result? The teams outsource more and more of the work, including analysis and communication, to AI until the overall output of the entire initiative is AI slop.

In a classroom, the cost of that denial of service is one semester of confused students who (hopefully) learn from their mistakes and evolve their approaches.

In the enterprise, the cost is different and much more consequential. PM teams are now generating more options than they can validate, more variants than they can compare, more “wins” than they can attribute. And nobody in the room can agree on which signal matters, because nobody decided what the signal was supposed to mean before the AI generated five versions of it. The velocity creates the appearance of progress without producing any evidence of it.

This has nothing to do with whether your team is using Copilot, Claude, ChatGPT, Gemini or whichever interface they prefer on top of these models. This isn’t a tooling problem. It’s a problem of product management fundamentals (or the lack thereof), and AI is making it increasingly impossible to ignore.

Blank calls it a lack of judgment. It’s actually a lack of discipline.

The judgment Blank is pointing at isn’t a personality trait, a magical “sense” only a few have, or a senior leader’s hard-won instinct. It’s a practitioner-level stack that AI tools don’t build for you and that most product orgs aren’t currently equipped to create and operate.

It has three layers:

- Outcomes, defined ahead of time. Not features. Not user stories. Not MVPs. The change in customer behavior or business result the team is trying to produce needs to exist as the success criterion before the AI generates a single option. “What if we prompted AI for outcomes instead of outputs?” is now the default question, not the optional one.

- Prompting that names the outcome. “Generate five payment workflow variations” produces five workflows. “Help me lift checkout conversion by 15% in the next six weeks” produces a different conversation and a different set of options to evaluate. The Three Habits I wrote about last week — defining done before generating, validating before scaling, deciding before iterating — are the practitioner expressions of that shift. Tool fluency is the price of admission. Decision quality is the moat.

- Culture that rewards stopping. In a classroom, students can stop and ask “is this the right problem?” because that’s literally what the class is for. In an enterprise setting, the team that stops to ask that question is the team that gets the “why isn’t anything shipping?” review on Friday. Until “we paused and re-scoped because the outcome wasn’t right” becomes a win category in the team review, velocity will keep outrunning validation in an unsustainable and wasteful way. Forever.

The MPO is the right direction. Let’s make it real.

Steve introduces a term in the article: MPO — Minimum Productive Outcome — as the successor to MVP. I think this is a step in the right direction. The label, by itself, isn’t a practice yet. But it’s closer to where the work needs to go than the broadly misunderstood term MVP ever was.

A real MPO isn’t a product. It’s a written, agreed-upon change in human behavior the team can validate in a defined time window, paired with the smallest amount of build that lets you collect, read and analyze the signal. The difference from the MVP is that the MVP was an experiment that had to be brought into existence. That took time and discipline that AI-powered teams seem to lack – either by choice or by executive directive. The MPO aligns the entire product development effort around the success criteria. The AI will continue to create the outputs, but if they don’t move the needle on the shared MPO, no value was delivered. That’s a hard discipline, and it’s one AI has made non-negotiable.
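To make “written, agreed-upon” concrete, here is a minimal sketch of what an MPO record could look like if a team chose to capture it in code. This is an illustration, not something from Blank’s article: the class name, field names, verdict labels, dates and numbers are all hypothetical, and it assumes a metric where higher is better.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MinimumProductiveOutcome:
    """Hypothetical MPO record, written down before any build starts."""

    behavior_change: str  # the change in customer behavior the team expects
    metric: str           # the signal the team agreed to read
    baseline: float       # metric value before the experiment
    target: float         # metric value that counts as success
    deadline: date        # last day of the validation window

    def verdict(self, observed: float, today: date) -> str:
        """Compare an observed metric value against the agreed target."""
        if observed >= self.target:
            return "validated"
        if today > self.deadline:
            return "not validated"
        return "still collecting"


# Illustrative values only: a 15% relative lift on an assumed 20% baseline.
mpo = MinimumProductiveOutcome(
    behavior_change="More shoppers complete checkout",
    metric="checkout conversion rate",
    baseline=0.20,
    target=0.23,
    deadline=date(2025, 6, 30),
)
```

The point of the frozen dataclass is that the success criterion cannot be quietly edited after the AI has generated options. Calling `mpo.verdict(observed, today)` then gives the team a shared answer to “did this move the needle?” instead of five competing readings of the same signal.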

Customer discovery requires human contact and analysis

As long as humans continue to consume the products and services we make, the best way to understand whether we’re delivering something valuable to them is through direct contact. Collapsing the timeline for the work that needs to get done before making that human contact is one of the great benefits of the AI tools available today. What we can’t outsource to AI is observation, conversation and critical thinking. To do those things we need time to think, to work through the data we’re collecting and to determine where the market is sending actual signals of value. Production has long been seen as a measure of progress. In a world where the cost of producing is, for now, insignificant, value is measured by finding that elusive minimum productive outcome.
