
Over the past 10 years we’ve been lucky to have a tremendous amount of content, practice and experience shared to help us build and design better products, services and businesses. One of the core concepts being adopted broadly from this body of work is the hypothesis: a tactical, testable statement that frames our ideas in a way that encourages experimentation, learning and discovery. We write our ideas not as requirements but as our best guesses for how to deliver value, with clear success criteria that tell us whether the idea was valuable and whether we delivered it in a compelling way.

While there are many templates, the one I’ve been teaching for the past few years looks like this:

We believe

[this outcome] will be achieved if

[these users] attain [a benefit]

with [this solution/feature/idea].
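As a rough sketch, the template can be treated as a small record with four slots. The class name, field names and example wording below are illustrative assumptions, not part of the template itself. The sketch shows how a team might spot a slot it has not yet managed to fill.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class Hypothesis:
    """One filled-in copy of the template (field names are hypothetical)."""
    outcome: str
    users: str
    benefit: str
    solution: str

    def missing_parts(self) -> List[str]:
        """Return the names of the template slots that are still blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Drop the four slots into the template sentence."""
        return (
            f"We believe {self.outcome} will be achieved if "
            f"{self.users} attain {self.benefit} with {self.solution}."
        )


# Made-up example: the team has not agreed on a solution yet.
draft = Hypothesis(
    outcome="more repeat purchases",
    users="returning shoppers",
    benefit="a faster checkout",
    solution="",
)
print(draft.missing_parts())
```

An empty list from `missing_parts()` only means every slot has text in it. Whether the team actually believes the finished sentence is still a judgement call.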

I like this template because the act of filling it out is the first test of the hypothesis. If you and your team can’t complete this template in a way that you believe, that’s a good indication you shouldn’t be working on that idea. But, assuming you’ve come up with some good ideas, you end up creating a new challenge for the team.

So many hypotheses, so little (discovery) time

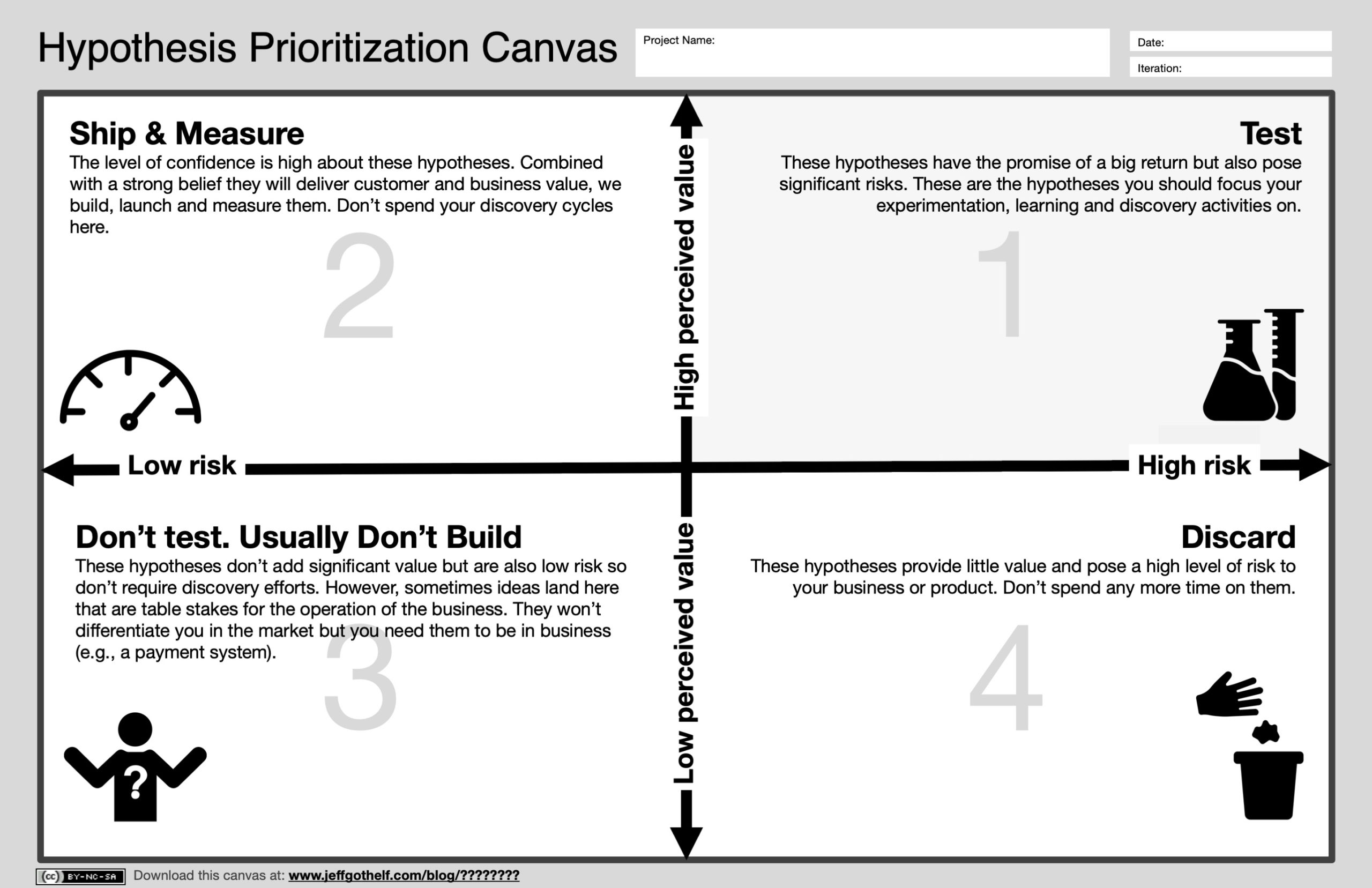

If you only have one hypothesis to test, it’s clear where to spend the time you have for discovery work. If you have many hypotheses, how do you decide where your precious discovery hours should be spent? Which hypotheses should be tested? Which ones should be de-prioritised or just thrown away? To help answer these questions I’ve put together the Hypothesis Prioritisation Canvas. This relatively simple tool, a companion to the Lean UX Canvas, can help facilitate an objective conversation with your team and stakeholders to determine which hypotheses will get your attention and which won’t. Let’s take a closer look at the canvas.

When should we use this canvas?

If you’re familiar with the Lean UX Canvas, the Hypothesis Prioritisation Canvas (HPC) comes into play between Box 6 (writing hypotheses) and Box 7 (choosing the most important thing to learn next). If you’re not familiar with it, the HPC comes into play once you’ve assembled a backlog of hypotheses. You’ve identified an opportunity or problem to solve, declared your assumptions and have come up with ideas to capitalise on the opportunity or solve the problem.

What kinds of hypotheses work with this canvas?

The HPC is designed to work with any hypothesis you come up with. It can work with tactical, feature-level hypotheses as well as business model hypotheses and everything in between.

How do we use the canvas?

The canvas is a simple matrix. The horizontal axis measures your assessment of the risk of each hypothesis. This is a team activity, and the assessment is the collective best guess of the people assembled about how risky this idea is to the system, product, service or business. The challenge with assessing risk is that every hypothesis is different. Because of this, your risk assessment will be contextual to the hypothesis you’re considering. For example, you may have to integrate modern technology with a legacy back-end system. In this case the risk is technical. You may be reimagining how consumers shop in your store, which is risky to your customers’ experience. Maybe you’re considering moving into an adjacent market after years focusing on a different target audience. The risk here is market viability and sustainability. Every hypothesis needs to be considered individually.

The vertical axis measures perceived value. The key word here is “perceived.” Because this is a hypothesis, a guess, the value we imagine our ideas will have is exactly that, imagined. It won’t be until a scalable, sustainable version of the idea launches that we’ll know whether it lives up to our expectations. At this point we can only guess the impact the idea will have on our business if we design and implement it well.

We take each hypothesis we’ve created to solve a specific business problem and map it onto the HPC’s matrix. Once we’ve completed this process, we assess where each hypothesis landed.

Box 1 — Test

Any hypothesis that falls into this box is high value and high risk, and it is one we should test. Based on what we know right now, this is a hypothesis with the chance of having significant impact on our business. However, if we get it wrong it also stands the chance of doing damage to our brand, our budget or our market opportunity. Our discovery time is always precious. These are the hypotheses that deserve that time, attention, experimentation and learning.

Box 2 — Ship & Measure

High-value, low-risk hypotheses don’t require discovery work. These are ideas we have a high level of confidence in and that, based on our experience and expertise, stand a good chance of impacting the business in a positive way. We build these ideas. However, we don’t just set and forget these solutions. We ship them and then measure their performance. We want to ensure they live up to our expectations.

Box 3 — Don’t test. Usually don’t build.

This is, perhaps, the least clear quadrant because some ideas that fall here have value despite the “low value” indication on the matrix. To be clear, hypotheses in Box 3 don’t get tested. In most cases they don’t get built either. However, there will be times when ideas land in this box that we need in order to build a successful business but that won’t differentiate us in the market. For example, if you’re going to do any kind of commerce online you’ll need a payment system. In most cases, how you collect payment is not going to differentiate you in the market. These types of ideas often end up in Box 3. They’re table stakes. We have to have them to operate but they won’t make us successful on their own. In these cases we build them and ensure they work well for our customers, but we don’t do extensive discovery on them prior to launch.

Box 4 — Discard

Hypotheses that we deem to have low value and high risk are thrown away. Not only do we not do discovery on them, we don’t build them either. These are ideas that came up in our brainstorm that we’ve now realised won’t add the value we’re seeking.
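The four boxes can be summed up as a small decision rule. The sketch below is a hedged illustration only: the 1 to 10 scoring scale, the `midpoint` cut-off, the function name and the sample backlog are all assumptions made for the example. The canvas itself relies on the team’s collective judgement, not on numeric scores.

```python
from enum import Enum


class Action(Enum):
    TEST = "Box 1: Test"
    SHIP_AND_MEASURE = "Box 2: Ship & Measure"
    DONT_TEST = "Box 3: Don't test. Usually don't build."
    DISCARD = "Box 4: Discard"


def place_on_canvas(value: int, risk: int, midpoint: int = 5) -> Action:
    """Map a team's perceived-value and risk scores to a box on the canvas.

    Scores above `midpoint` count as "high". The scale and the cut-off are
    hypothetical; a real session would place sticky notes by discussion.
    """
    high_value = value > midpoint
    high_risk = risk > midpoint
    if high_value and high_risk:
        return Action.TEST              # worth precious discovery time
    if high_value:
        return Action.SHIP_AND_MEASURE  # build it, then check performance
    if high_risk:
        return Action.DISCARD           # low value, high risk
    return Action.DONT_TEST             # low value, low risk (table stakes may still get built)


# Made-up backlog: (hypothesis, perceived value, risk)
backlog = [
    ("Reimagined in-store shopping flow", 9, 8),
    ("Saved payment details", 8, 2),
    ("Standard payment system", 3, 2),
    ("Novelty loyalty badge", 2, 9),
]
for name, value, risk in backlog:
    print(f"{name}: {place_on_canvas(value, risk).value}")
```

Keeping the result as an enum makes the Box 3 exception easy to handle separately: a team can still flag a “Don’t test” item as table stakes and build it without discovery work.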

Ultimately the value of the HPC depends on whether and how your team uses it. Take it out for a spin. It’s intended to be a team activity. Let me know how it works for you, where it can be improved and whether you find it useful or not.

I’m excited to hear your feedback.


One response to “The Hypothesis Prioritization Canvas”

Hi Jeff,

This is really helpful (as always) – thank you.

I wonder if there would be any merit in adding a line to the end of your hypothesis template along the lines of “because [of this evidence and scientific theory].”

This grounds the hypothesis in existing evidence and established social scientific theory. It might also help avoid the potential pitfall that I’ve seen some businesses fall into, namely assuming that clients are rational actors driven by clear interests, when it might be more helpful to think of them as complex, emotional people driven by instincts.