I was on stage in Helsinki earlier this week at ScanAgile 2026, giving a talk called “AI Made It Easy — Who Decides What’s Good?” It was the first time I’d given this talk. It felt good; the room seemed to get what I was proposing. I was in a good flow and closed strongly. Then we got to the Q&A, and someone asked a question I didn’t have a clean answer to.

“You can quantify the cost of building things,” they said. “But how do you quantify judgment? How do you teach it so that it’s used more often?”

I gave an answer. To be honest, I’m not sure what I said. I don’t think it was a good answer. But the question stuck with me on the flight home.

Judgment was always the differentiator. AI just made it obvious.

I’ve mentioned this here before: the cost of producing products, services, and features is dropping fast. AI has compressed work that used to take weeks into hours. That constraint (execution, building, shipping) is largely gone. What’s left is the constraint that was always there, just buried under all the other work: judgment.

Judgment is the ability to decide, objectively, what to build, for whom, and why this feature rather than that one. It’s what separates a product manager who just ships things from a product manager who ships the right things with a clear sense of why they believe that decision is right. AI didn’t create this gap. It removed the noise that used to hide it.

AI making judgment more visible doesn’t mean it’s the only differentiator. Brand, timing, execution quality, competitive market assessment, and organizational trust still matter. But judgment has moved to the front of the line. It’s getting harder to out-execute a competitor. You can still out-think them.

The uncomfortable truth is that good judgment — real product taste — comes from experience and expertise. It’s built over time through customer exposure, failed bets, big wins, hard tradeoffs, and honest retrospectives. You can’t download it. You can’t prompt for it. But you can teach the behaviors that build it.

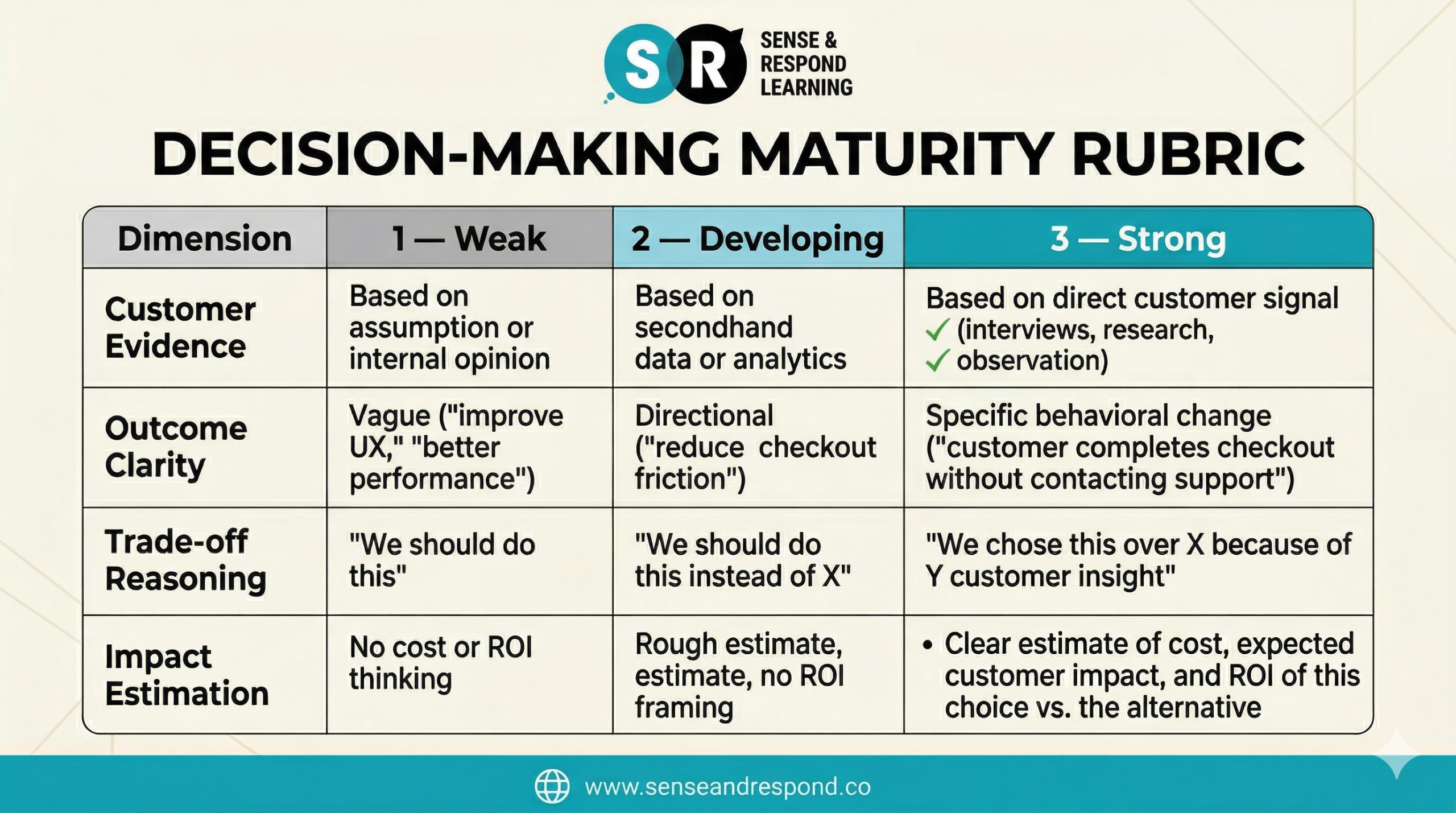

A rubric for making judgment visible

This is what I wish I’d said in Helsinki: you may not be able to put a number on taste, but you can make the inputs to good judgment visible, discussable, and teachable. That’s where a rubric helps.

Good product judgment is grounded in the same fundamentals that have always separated great PMs from mediocre ones. The best AI product managers I know aren’t better because they understand AI. They’re better because they’re good product managers first — with a deep sense of the customer and the business — who also happen to understand AI. The fundamentals still hold.

Here’s the rubric I’ve been thinking through since that Q&A. Use it to evaluate a decision your team just made, or as a teaching framework to level up how your team reasons. It has four dimensions, each scored from 1 to 3, so totals range from 4 to 12.

A total score of 12 means strong judgment on every dimension. A score of 4–6 means the decision is mostly assumption-driven, and that’s a coaching conversation, not a performance review.
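If it helps to see the scoring arithmetic spelled out, here’s a minimal Python sketch. It assumes four dimensions, since that’s the only way scores of 1–3 per dimension add up to a 4–12 range. Only Customer Evidence is named in this post, so “Dimension 2” through “Dimension 4” are hypothetical placeholders, and `score_decision` is an illustrative name, not part of any tool. Totals from 7 to 11 get no band label because none is described above; the function simply lists which dimensions fell short of a 3.

```python
from typing import Dict, List, Optional, Tuple

# "Customer Evidence" is the only dimension named in the post; the other
# three keys are hypothetical placeholders for the rest of the rubric.
DIMENSIONS = ("Customer Evidence", "Dimension 2", "Dimension 3", "Dimension 4")


def score_decision(scores: Dict[str, int]) -> Tuple[int, Optional[str], List[str]]:
    """Total four 1-3 scores, map the total to a band, and list coaching gaps."""
    if set(scores) != set(DIMENSIONS):
        raise ValueError(f"expected one score for each of {DIMENSIONS}")
    for name, value in scores.items():
        if value not in (1, 2, 3):
            raise ValueError(f"{name} must be 1, 2, or 3 (got {value})")

    total = sum(scores.values())  # always between 4 and 12
    if total == 12:
        band = "strong judgment on every dimension"
    elif total <= 6:
        band = "mostly assumption-driven: a coaching conversation"
    else:
        band = None  # 7-11: no band is described for this range

    # Dimensions scoring below 3 are where development effort should go.
    gaps = [name for name in DIMENSIONS if scores[name] < 3]
    return total, band, gaps


if __name__ == "__main__":
    total, band, gaps = score_decision(
        {"Customer Evidence": 1, "Dimension 2": 2, "Dimension 3": 2, "Dimension 4": 1}
    )
    print(total, band, gaps)
```

The gap list matters more than the total: a PM who keeps showing up with Customer Evidence in that list is telling you where to coach.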

The value of this rubric isn’t just evaluation. It’s training. When a PM consistently can’t reach a 3 on Customer Evidence, you know exactly where to focus your development investment. The rubric teaches the expected behavior by making it explicit and repeatable. It also makes clear exactly what’s expected from today’s product managers. Over time, those behaviors become habits. Those habits become taste.

Try it this week

Bring this rubric to your next sprint review, your next prioritization meeting, your next planning session. Don’t use it to grade people. Use it to make judgment visible and debatable. Surface how decisions are actually being made. When the reasoning is out in the open, it gets better — for everyone.

The goal isn’t perfect judgment. It’s a transparent look at what makes judgment “good” and what’s expected of your product managers. You’re looking for the kind of judgment that can explain itself, learn from outcomes, and improve over time. Your goal is to move away from “let’s just ship it and see what happens” to a deliberate, high-quality practice of expert decision-making.

Sense & Respond Learning can help bring good judgment to your AI product teams. Our 2026 AI-centered curriculum is built for product leaders who want to go beyond execution and build organizations that can actually decide what’s worth building. Learn more at senseandrespond.co.

Bonus! Interactive version of the decision-making matrix.

I’ve been messing around with Claude’s new interactive charts feature and thought this might make a good experiment. Below is the output of that effort. Let me know if it’s valuable to you.

Decision-Making Maturity Rubric

Rate your team honestly on each dimension. Pick the description that best matches how decisions are actually made — not how you’d like them to be.
