“Strong opinions, loosely held” is one of the most useful ideas in product development. I first learned the phrase from Janice Fraser years ago as she, and others, were pioneering the intersection of lean startup, agile, design thinking and entrepreneurship.

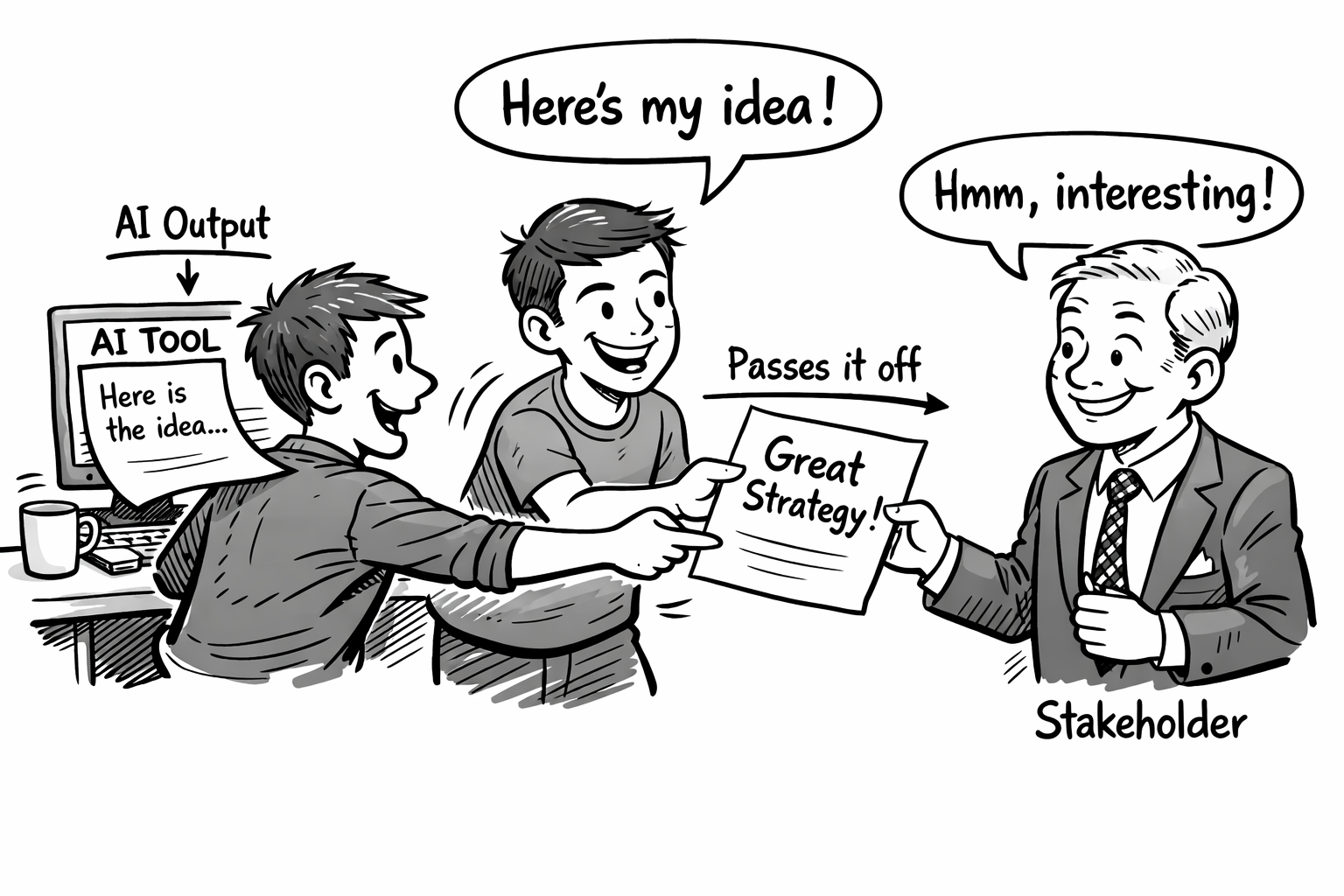

I was reminded of this phrase in a meeting recently with a client team. The lead product manager on a user-facing customer service AI chatbot initiative presented a competitive analysis. She’d clearly done the work. Her presentation was complete with slides, a comparison matrix, and a confident recommendation. When someone challenged one of her findings, she defended her position with conviction. Strong opinion, held firmly.

Afterward I asked her how she’d arrived at the analysis. She pulled out her laptop and showed me the prompt she’d used.

The opinion wasn’t hers. She’d just agreed with it.

Strong opinions, loosely held: what the phrase was actually for

The idea behind “strong opinions, loosely held” is simple: form a strong hypothesis, test it, collect evidence and adjust your “opinion” based on what you learn.

The operative word is hypothesis. A strong opinion, in this framing, is the output of hard thinking, of pattern recognition built from experience, and of sitting with incomplete information long enough to form a view. It’s not certainty. It’s an informed guess.

The “loosely held” part is arguably more important. It means: I’ve thought about this, I’m committing to a direction, and I’m watching closely for signals that I’m wrong.

That’s not just a philosophy. That’s humility in action, and it’s how good product managers work.

What changes when the opinion comes from an AI tool

AI analysis tools are extraordinarily good at generating confident-sounding outputs. Give a good model a competitive landscape prompt and it will return something that looks exactly like a strong opinion — structured, specific, reasoned, even nuanced.

The problem isn’t that it’s wrong. It’s often surprisingly on target, or at least plausible. The problem is that the AI tool doesn’t bring any of your experience into consideration. It has no skin in the game, no memory of what you tried last quarter and why it failed, no feel for what your specific customers actually care about versus what the market generally cares about.

A human practitioner’s strong opinion is built from friction. It comes from a meeting that went sideways or a customer who said the opposite of what everyone expected. It’s driven by instinct refined across a hundred similar interactions and decisions.

An AI tool’s strong opinion is built from pattern matching across everything it’s seen. It’s not wrong. But it’s not your experience or opinion.

Here’s what I’ve started to notice. Teams using AI for analysis are arriving at meetings with stronger-sounding opinions, polished presentations and less capacity to defend them when challenged. They’ve outsourced the thinking and kept the confidence. The phrase has been quietly inverted. They’re holding AI-generated outputs firmly, when the whole point was to hold their own views loosely.

The upgrade for the AI era

“Strong opinions, loosely held” needs a new clause for 2026:

Strong hypotheses, human-verified. Rigorously tested.

The AI tool can help you form the hypothesis. It’s genuinely useful for that. It can surface patterns you’d miss, synthesize information faster than you can read it, and give you a starting framework when you have nothing.

But the moment you take that output into a meeting as your view, without running it against customer conversations and your team’s lived experience, you stop asking “what would have to be true for this to be wrong?” That is where the danger lies: you’ve mistaken a pattern-matched output for a practitioner’s judgment.

This is precisely what product discovery is designed to prevent. The job hasn’t changed: you still have to form the opinion. The AI just makes it faster to have something that looks like one.

Next time someone hands you an AI-generated analysis, ask them what their own opinion is of the findings they’re about to present. Ask them what would have to be right for their point of view to be true. If the answers cause friction, your conversation is headed in the right direction.

That friction is where their actual opinion lives.

One response to “Strong opinions, loosely held — and what that means in the age of AI”

[…] Strong opinions, loosely held — and what that means in the age of AI (13min) We’ve all been in a situation where someone came with a strong opinion to the meeting. But as soon as you start digging, you understand it’s not their opinion, but an AI-generated one. And it’s going to happen more and more. AI can help with analysis, yup. But it can’t replace lived experience. And product discovery (aka user research) is what helps turn opinions into hypotheses that can then be tested to improve products. I love this quote: “Next time someone hands you an AI-generated analysis, ask them what their opinion is of the findings they’re about to present? Ask them what would have to be right for their point of view to be true? If the answers cause friction, your conversation is headed in the right direction. That friction is where their actual opinion lives.” Great article by Jeff Gothelf. […]