A couple of weeks ago, South Africa discovered a new variant of the coronavirus, now known as Omicron, and quickly reported it to the World Health Organization and other relevant authorities. Almost instantly, nations around the world began shutting down traffic of all kinds from South Africa for fear of the new variant. No outbound flights. No inbound flights. Commerce slowed dramatically.

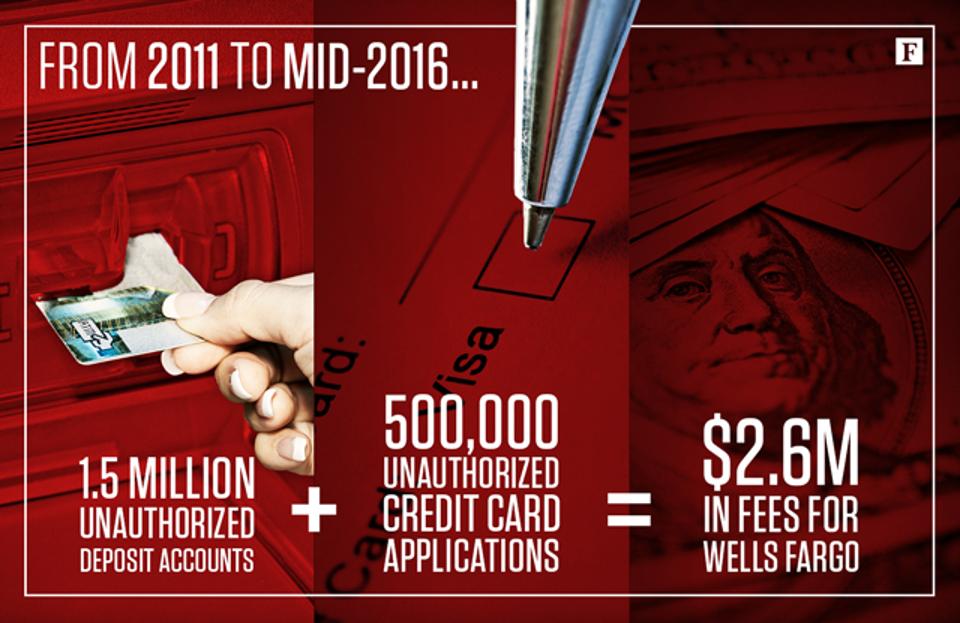

A couple of years ago, Wells Fargo, the big American bank, was exposed in a massive scandal in which thousands of its employees created fraudulent accounts. It turned out that employees were opening accounts in customers' names without their knowledge or consent. Thousands of fake accounts were created. Why? Because the bank was running an incentive program that rewarded employees for creating accounts. So they did just that. They just forgot to tell their customers.

And it’s well documented that Facebook played a key role in the genocide of the Rohingya minority in Myanmar in recent years. As BSR notes on its website, referring to the UN fact-finding mission on Myanmar, “The use of Facebook to spread anti-Muslim, anti-Rohingya sentiment featured prominently in the Mission’s report.” Why would the UN implicate Facebook in such atrocities? Because Facebook’s design incentivizes users to post content filled with hate, anger and racism. If a post draws attention and drives traffic and click-throughs, Facebook wants it, even if it threatens the lives of thousands of people.

But we’re managing to outcomes, right?

If you’re a regular reader of this blog, you’ll know that I often write about managing to outcomes, and that our measures of success should be positive changes in human behavior, specifically in the behavior of the humans we serve with our products and services. Looking at the three examples above, you’ll notice that the measure of success for each of these organizations was indeed a change in human behavior. South Africa reported the Omicron variant and was immediately castigated for it. Wells Fargo employees successfully opened new bank accounts. And Facebook users in Myanmar consumed, posted, shared and commented on tons of content. Facebook’s design encouraged it.

The design of these systems incentivized these behaviors. All of them were intentional and rewarded their creators for driving this behavior. None of them paused to analyze whether this was indeed the right behavior, or whether the incentives were driving negative behavior with real-world consequences. In a way, you could say these systems “worked as designed.” They achieved the behavior change they sought to create. They hit their OKRs!

If we drive negative behavior, we are not successful

This kind of blind adherence to “the metrics” can be extremely dangerous in an interconnected world. As we work to design systems, services, products and experiences, we must continuously check for negative real-world consequences created by our work. Are we incentivizing the right behaviors? How will we know? While we have “positive” key results to keep an eye on, are there “negative” key results (i.e., harmful outcomes or changes in user behavior) we should also monitor?

Sometimes those negative behaviors will bring us closer to our corporate goals. But if that’s how we define success, are we truly delivering value?