A few months ago, Josh Seiden (co-founder of Sense & Respond Learning) and I realized we were staring at a real problem.

The market had shifted. Our customers, practitioners and their leaders inside large organizations, were still wrestling with the same challenges we’d spent twenty years helping them solve. The fundamentals of good product work hadn’t changed. If anything, in an AI-accelerated world, they mattered more than ever. But that wasn’t the conversation our customers wanted to have. Everyone wanted to talk about AI first. And we hadn’t positioned our business that way, at least not yet.

The old move would have been weeks, if not months, of deliberate brand work. Interview customers. Audit competitors. Develop positioning options. Test a few hundred dollars of ads to see what resonates. A month or more of careful analysis before we changed a word on the website.

Instead, we spent a couple of days brainstorming. We fired up Claude to identify where our existing curriculum (twenty years of work on product discovery, product management, OKRs, storytelling, and outcome-driven teams) intersected with what the market was suddenly asking for, and where it didn’t. We took those gaps, worked in our argument that the fundamentals of good product management are the key to AI success, and wrote new positioning. Two days later, we shipped a new website iteration.

Two days. Not two months. And the early signals across every public channel have been overwhelmingly positive.

I’m not sharing this to brag. I’m sharing it because we didn’t invent this. We’ve been teaching it for years. We just finally had the tools to actually do it this fast. Being wrong about our positioning for two days is survivable. Being six months late to a market shift is not.

You already know this

Here’s what I want to acknowledge before making my argument: you’ve heard some version of this before. Probably more than once from me.

Make provisional decisions. Iterate fast. Treat commitments as hypotheses. AI makes course correction cheaper than it’s ever been: code in hours, analysis in minutes, copy tested in days. The cost of a wrong directional call is no longer a six-month detour. It’s a two-week correction, if that. The intellectual case for faster decision-making isn’t the thing stopping you. You’ve known this for years. Perhaps in the past someone could have argued for ignoring this approach. Now, in an AI-powered world, it’s not optional. So why isn’t it happening?

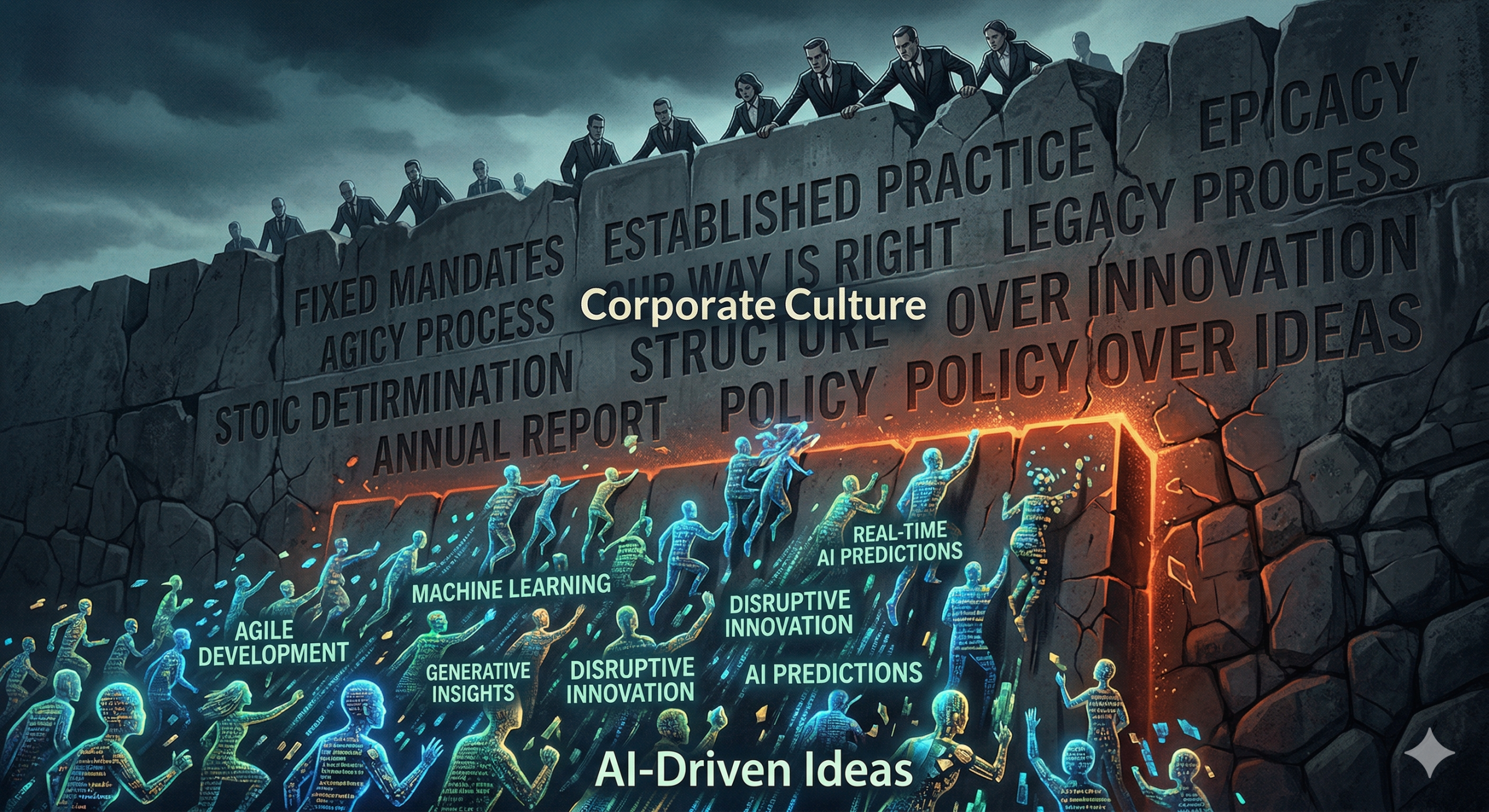

Because your organization is designed to punish it

Think about what actually gets rewarded in your organization.

Leaders who call the right shot get credit. Leaders who make a provisional call, get new data, and change course get questioned. Was the original decision wrong? Did they not think it through? Do they not know how to do their job? The pivot initially looks like a failure even when it’s exactly what smart, fast learning looks like.

Wrong bets get punished. Certainty gets rewarded. And so, rationally, people wait. They schedule another review. They ask for more data. They try to get alignment before committing. Not because they’re slow or afraid — but because the system they’re inside has been optimizing for a world where the cost of being wrong was high. That world still shapes the culture, even as the economics and the technology that drives it change beneath it.

This is the wall AI doesn’t knock down. And without knocking it down, the tools don’t help. If leadership still punishes wrong bets and rewards false certainty, AI won’t produce faster learning. It’ll produce faster shipping of features that don’t add value. The technology amplifies whatever the culture already rewards. If your culture can’t or won’t adapt to current realities, AI just makes the existing problems bigger, faster.

What has to change before the tools can help

Safe-to-fail isn’t a technical practice. It’s a leadership commitment. And this is the part that matters most for teams inside large organizations — banks, logistics firms, healthcare companies, energy organizations. You can’t move fast and break things. The compliance and legal teams will eat you for lunch. The brand stakes are real. I know.

But you can build safe-to-fail learning experiments. The distinction matters. A safe-to-fail experiment is deliberate, limited, timeboxed. It comes down, or pivots, the moment you’ve learned what you needed to learn. The goal is not a continuous stream of updates and direction changes — that exhausts your users and your dependent teams. They can’t consume it, and you’ll erode the trust it took years to build.

For this to work, leadership has to name it out loud: we will run experiments, some of them won’t work, and that’s not a failure — that’s the job. Without that commitment, the first team that pivots after a wrong bet will get an earful. And after that, nobody tries again.

Here’s your challenge

Take a decision your team has been circling. Something you’ve been debating, aligning on, waiting to get right before you commit. Ask one question: what could you ship as a safe-to-fail experiment in a single day?

If your organization can’t move in a day, try three. If not three, try five. Expand the timeline as needed: inside a complicated enterprise organization with heavy dependencies, one day might realistically be two weeks. That’s fine. The point isn’t the number. It’s the speed: whatever you’re doing today, do it dramatically faster.

But before you go build something, have a different conversation first. Go to your leadership and ask: if this experiment shows we were wrong, will we be rewarded for learning fast — or questioned for not getting it right the first time?

The answer tells you everything about whether your organization is actually ready to capture what AI makes possible.
